
I need to get all of the images from one website that are contained in one folder, for instance site.com/images/.*. Is this possible? If so, what's the best way?

Automatic Image Downloader advertises one-click bulk downloads of wallpapers/images from any website, and it filters out low-resolution images for you. Bulk Image Downloader downloads full-sized images from almost any web gallery and supports flickr, imagevenue, imagefap and most other popular image hosts. The only way that worked for me was something like Internet Download Manager, which has a batch-download option: it accepts a wildcard (*) and looks for all the files in that folder.


bryan sammon


5 Answers

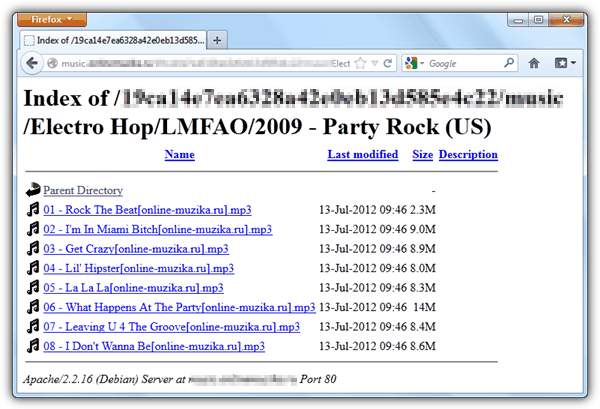

Have a look at HTTrack. It can download whole sites: give it the address site.com/images/ and it will download everything in that directory (as long as the owner hasn't restricted access to the directory).


Do you have FTP access?

Do you have shell access?

With Linux it's pretty easy. Not sure about Windows.

Edit: Just found wget for Windows.

Edit 2: I just saw the PHP tag. To create a PHP script that downloads all the images in one go, you will have to build a zip (or equivalent) archive and send it with the correct headers. Here is how to zip a folder in PHP; it wouldn't be hard to pick out only the images in that folder, just edit the code given to say something like:

rich97
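The PHP code that answer points to isn't reproduced here. As a rough, hypothetical illustration of the same idea (pack only the images from one folder into a single archive), here is a Python sketch using the standard `zipfile` module; the function name `zip_images` and the extension list are assumptions, not part of the original answer.

```python
import os
import zipfile

# Assumed set of extensions that count as "images"; adjust as needed.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif", ".webp", ".bmp"}


def zip_images(folder: str, zip_path: str) -> list[str]:
    """Write every image file directly inside `folder` into `zip_path`.

    Returns the archive member names in sorted order.
    """
    names = []
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for entry in sorted(os.listdir(folder)):
            full = os.path.join(folder, entry)
            ext = os.path.splitext(entry)[1].lower()
            # Skip sub-directories and non-image files.
            if os.path.isfile(full) and ext in IMAGE_EXTENSIONS:
                zf.write(full, arcname=entry)  # store without the folder prefix
                names.append(entry)
    return names
```

To serve the archive from a web script, as the answer suggests, it would be sent with headers such as `Content-Type: application/zip` and `Content-Disposition: attachment; filename="images.zip"`.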

If the site allows directory indexing, all you need to do is:

wget -r --no-parent http://site.com/images/

Reese Moore
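`--no-parent` keeps wget from climbing above the starting directory while it recurses. A simplified Python model of that rule might look like this; `stays_below` is a hypothetical helper, not part of wget, and it assumes the start URL names a directory when it ends in `/`.

```python
from urllib.parse import urljoin, urlsplit


def stays_below(start_url: str, page_url: str, href: str) -> bool:
    """Approximate wget's --no-parent check for a link found on page_url."""
    start = urlsplit(start_url)
    target = urlsplit(urljoin(page_url, href))
    # Links to another scheme or host are out of scope.
    if (target.scheme, target.netloc) != (start.scheme, start.netloc):
        return False
    # A start URL ending in "/" is a directory; otherwise use its parent directory.
    if start.path.endswith("/"):
        prefix = start.path
    else:
        prefix = start.path.rsplit("/", 1)[0] + "/"
    return target.path.startswith(prefix)


start = "http://site.com/images/"
print(stays_below(start, start, "cat.jpg"))        # True
print(stays_below(start, start, "../index.html"))  # False
```

The trailing slash matters: with `http://site.com/images` (no slash) the sketch treats `images` as a file in `/`, so everything on the host counts as "below" it.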

It depends on whether the images directory allows listing its contents. If it does, great; otherwise you would need to spider the website to find all the image references to that directory.

In either case, take a look at wget.

Orbling
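When the directory does allow listing, the server returns an HTML index page whose links point at the files. A minimal Python sketch of pulling the image URLs out of such a page, assuming an Apache-style listing and a hypothetical extension list, using only the standard library's `html.parser` (fetching the page itself is left out):

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# Assumed image extensions; extend as needed.
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png", ".gif", ".webp", ".bmp")


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def images_in_listing(listing_url: str, html: str) -> list[str]:
    """Return absolute URLs of the image links in a directory listing."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    urls = []
    for href in parser.hrefs:
        # Ignore query strings and fragments (e.g. Apache's ?C=N;O=D sort links).
        path = href.split("?", 1)[0].split("#", 1)[0]
        if path.lower().endswith(IMAGE_EXTENSIONS):
            urls.append(urljoin(listing_url, href))
    return urls


listing = ('<a href="?C=N;O=D">Name</a> <a href="/">Parent Directory</a> '
           '<a href="cat.jpg">cat.jpg</a> <a href="notes.txt">notes.txt</a>')
print(images_in_listing("http://site.com/images/", listing))
# ['http://site.com/images/cat.jpg']
```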

If you just want to see the images a web page uses and you're in Chrome, press F12 (or open Developer Tools from the menu) and go to the Resources tab (called Application in newer Chrome versions). There's a tree on the left; under Frames you'll see an Images folder listing all the images the page uses.

live-love
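Roughly the same list the DevTools panel shows can be approximated from the page's HTML. A minimal Python sketch that collects `<img src>` URLs, assuming the images are referenced by plain `<img>` tags (CSS backgrounds, `srcset` and script-loaded images would be missed); the helper names are illustrative:

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ImageSourceCollector(HTMLParser):
    """Collect absolute URLs from the src attribute of <img> tags."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.sources = []

    def handle_starttag(self, tag, attrs):
        # Self-closing <img ... /> also arrives here via handle_startendtag.
        if tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(urljoin(self.base_url, src))


def page_images(page_url: str, html: str) -> list[str]:
    collector = ImageSourceCollector(page_url)
    collector.feed(html)
    collector.close()
    # Keep first-seen order while dropping duplicates.
    return list(dict.fromkeys(collector.sources))


html = '<img src="/images/a.jpg"><p><img src="b.png" /></p><img src="/images/a.jpg">'
print(page_images("http://site.com/gallery/page.html", html))
# ['http://site.com/images/a.jpg', 'http://site.com/gallery/b.png']
```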

Tags: php, javascript, linux, perl, image

I have been using Wget, and I have run across an issue. I have a site that has several folders and subfolders, and I need to download all of the contents of each folder and subfolder. I have tried several methods using Wget, but when I check the result, all I can see in the folders is an 'index' file. I can click on the index file and it takes me to the files, but I need the actual files.

Does anyone have a Wget command that I have overlooked, or is there another program I could use to get all of this?

Site example:

www.mysite.com/Pictures/ (within the Pictures directory there are several folders...)

www.mysite.com/Pictures/Accounting/

www.mysite.com/Pictures/Managers/North America/California/JoeUser.jpg

I need all files, folders, etc...


Der Hochstapler

Horrid Henry

3 Answers

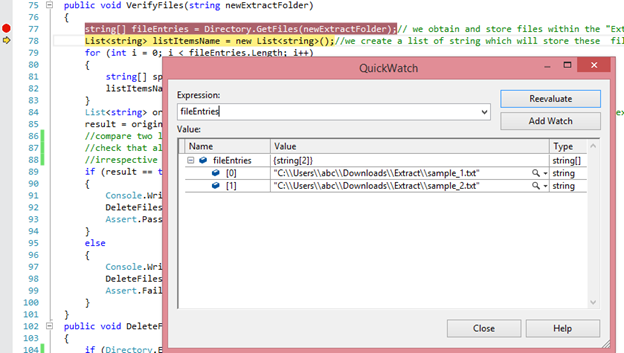

I'll assume you haven't tried this:

Or, to retrieve the content without downloading the 'index.html' files:

Reference: Using wget to recursively fetch a directory with arbitrary files in it

Community♦

Felix Imafidon
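What the question is after (recursing through every sub-folder while skipping the generated index pages) can be modelled in a few lines. This Python sketch walks Apache-style listings; `fetch` is a hypothetical callable you supply that returns a listing page's HTML (for example via `urllib.request`), which keeps the crawl logic testable offline. It is an illustration of the idea, not how wget is implemented.

```python
from html.parser import HTMLParser
from typing import Callable
from urllib.parse import urljoin, urlsplit


class HrefCollector(HTMLParser):
    """Collect the href of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def walk_listing(start_url: str, fetch: Callable[[str], str]) -> list[str]:
    """Return every file URL below start_url, recursing into sub-directories.

    `fetch` is a caller-supplied (hypothetical) function: URL -> listing HTML.
    """
    start = urlsplit(start_url)
    files, seen, pending = [], set(), [start_url]
    while pending:
        url = pending.pop()
        if url in seen:
            continue
        seen.add(url)
        parser = HrefCollector()
        parser.feed(fetch(url))
        for href in parser.hrefs:
            target = urljoin(url, href)
            parts = urlsplit(target)
            # Skip sort links (?C=N;O=D), other hosts, and anything above the
            # start directory, such as the "Parent Directory" link.
            if (parts.query or parts.netloc != start.netloc
                    or not parts.path.startswith(start.path)):
                continue
            if parts.path.endswith("/"):
                pending.append(target)  # a sub-directory listing to visit
            elif not parts.path.rsplit("/", 1)[-1].startswith("index.html"):
                files.append(target)    # a real file, not a generated index page
    return sorted(files)
```

Passing a dictionary's `__getitem__` as `fetch` lets you try it against canned listing pages before pointing it at a real server.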

I use

wget -rkpN -e robots=off http://www.example.com/

-r means retrieve recursively.

-k means convert links: links in the downloaded pages are rewritten to point to the local copies instead of example.com/bla, so the copy works offline.

-p means get all page requisites, i.e. the images, CSS and JavaScript files needed to display each page properly.

-N turns on time-stamping: a file is downloaded again only if the remote copy is newer than the local one, so files whose local copy is up to date are skipped.

-e executes a command as if it were in .wgetrc; it has to be there for robots=off to work.

robots=off means ignore the robots.txt file.
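As a rough model of the time-stamping rule behind -N: according to the wget manual, a file is fetched when there is no local copy, when the remote copy is newer, or when the sizes differ. A hypothetical Python helper expressing that decision from the server's `Last-Modified` header and an optional size (treating a missing header as "download" is an assumption of this sketch):

```python
import os
from email.utils import parsedate_to_datetime
from typing import Optional


def needs_download(local_path: str, last_modified: Optional[str],
                   remote_size: Optional[int] = None) -> bool:
    """Rough model of wget -N: fetch only if the remote copy looks newer or differs in size."""
    if not os.path.exists(local_path) or last_modified is None:
        return True  # nothing to compare against (assumption: download anyway)
    remote_ts = parsedate_to_datetime(last_modified).timestamp()
    if remote_ts > os.path.getmtime(local_path):
        return True  # remote copy is newer
    # Same age or older: re-download only if the sizes differ.
    return remote_size is not None and remote_size != os.path.getsize(local_path)
```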

I also had -c in this command, so if the connection dropped, it would continue where it left off when I re-ran the command. I figured -N would go well with -c.
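Continuing a download the way -c does relies on the HTTP `Range` header: ask only for the bytes after the ones already on disk, and append only if the server answers `206 Partial Content`. A hypothetical Python sketch of those two decisions (the function names are illustrative; the actual request is left out):

```python
import os


def resume_headers(partial_path: str) -> dict:
    """Request headers for continuing a partially downloaded file."""
    if not os.path.exists(partial_path):
        return {}
    offset = os.path.getsize(partial_path)
    # "bytes=N-" asks for everything from byte N to the end of the file.
    return {"Range": f"bytes={offset}-"} if offset else {}


def open_mode(status: int) -> str:
    """Choose how to open the local file based on the response status."""
    if status == 206:  # Partial Content: the server honoured the Range request
        return "ab"
    if status == 200:  # OK: the server sent the whole file, so start over
        return "wb"
    raise ValueError(f"unexpected HTTP status {status}")
```

Checking the status matters because a server that ignores `Range` replies with 200 and the full body; appending that to the partial file would corrupt it.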

Tim Jonas

wget -m -A '*' -pk -e robots=off www.mysite.com/

This will download all types of files locally, point to them from the HTML files, and ignore the robots file. Quote the * so the shell doesn't expand it into local file names (without -A, wget accepts every file type anyway).

Abdalla Mohamed Aly Ibrahim
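-A takes a comma-separated accept list; the wget manual says an entry containing a wildcard (*, ?, [ or ]) is treated as a pattern and anything else as a file-name suffix. A hypothetical Python approximation of that check, which is handy for the original goal of keeping only images (for example `-A jpg,png,gif`). It matches case-sensitively, as wget does by default:

```python
from fnmatch import fnmatchcase
from urllib.parse import unquote, urlsplit


def accepted(url: str, acclist: str) -> bool:
    """Approximate wget's -A check for one URL's file name."""
    name = unquote(urlsplit(url).path.rsplit("/", 1)[-1])
    for rule in acclist.split(","):
        rule = rule.strip()
        if not rule:
            continue
        if any(ch in rule for ch in "*?[]"):
            # Wildcard present: treat the entry as a shell-style pattern.
            if fnmatchcase(name, rule):
                return True
        elif name.endswith(rule):
            # No wildcard: treat the entry as a file-name suffix.
            return True
    return False
```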

Tags: wget
